Getting Started

In this project, you will analyze a dataset containing data on various customers' annual spending amounts (reported in monetary units) of diverse product categories for internal structure. One goal of this project is to best describe the variation in the different types of customers that a wholesale distributor interacts with. Doing so would equip the distributor with insight into how to best structure their delivery service to meet the needs of each customer.

The dataset for this project can be found on the UCI Machine Learning Repository. For the purposes of this project, the features 'Channel' and 'Region' will be excluded in the analysis — with focus instead on the six product categories recorded for customers.

Run the code block below to load the wholesale customers dataset, along with a few of the necessary Python libraries required for this project. You will know the dataset loaded successfully if the size of the dataset is reported.

# Import libraries necessary for this project
import numpy as np
import pandas as pd
from IPython.display import display  # Allows the use of display() for DataFrames

# Import supplementary visualizations code visuals.py
import visuals as vs

# Pretty display for notebooks
%matplotlib inline

# Load the wholesale customers dataset
try:
    data = pd.read_csv("customers.csv")
    data.drop(['Region', 'Channel'], axis=1, inplace=True)
    print("Wholesale customers dataset has {} samples with {} features each.".format(*data.shape))
except IOError:  # raised (as FileNotFoundError on Python 3) when customers.csv is missing
    print("Dataset could not be loaded. Is the dataset missing?")

Data Exploration

In this section, you will begin exploring the data through visualizations and code to understand how each feature is related to the others. You will observe a statistical description of the dataset, consider the relevance of each feature, and select a few sample data points from the dataset which you will track through the course of this project.

Run the code block below to observe a statistical description of the dataset. Note that the dataset is composed of six important product categories: 'Fresh', 'Milk', 'Grocery', 'Frozen', 'Detergents_Paper', and 'Delicatessen'. Consider what each category represents in terms of products you could purchase.

# Display a description of the dataset

display(data.describe())

Implementation: Selecting Samples

To get a better understanding of the customers and how their data will transform through the analysis, it would be best to select a few sample data points and explore them in more detail. In the code block below, add three indices of your choice to the indices list which will represent the customers to track. It is suggested to try different sets of samples until you obtain customers that vary significantly from one another.

# TODO: Select three indices of your choice you wish to sample from the dataset
## me: randomly generate three rows
from random import randint, seed
seed(12)
# randint's bounds are inclusive, so valid row labels run from 0 to data.shape[0] - 1
indices = [randint(0, data.shape[0] - 1) for i in range(3)]

# Create a DataFrame of the chosen samples
samples = pd.DataFrame(data.loc[indices], columns=data.keys()).reset_index(drop=True)
print("Chosen samples of wholesale customers dataset:")
display(samples)

Question 1

Consider the total purchase cost of each product category and the statistical description of the dataset above for your sample customers.

What kind of establishment (customer) could each of the three samples you've chosen represent?

Hint: Examples of establishments include places like markets, cafes, and retailers, among many others. Avoid using names for establishments, such as saying "McDonalds" when describing a sample customer as a restaurant.

print('Chosen samples offset from mean of dataset:')

display(samples - np.round(data.mean()))

print('Chosen samples offset from median of dataset:')

display(samples - np.round(data.median()))

print('Quantile Visualization:')

percentile = 100 * data.rank(pct = True).loc[indices].round(decimals=2) # Computes percentage rank of data

display(percentile)

import seaborn as sns

sns.heatmap(percentile, vmin=1, vmax=99, annot=True)

## below is the code for previous answer.

# total purchase for each product category

#display(samples.sum(axis=0))

# show the percentage of each customer over the sum

#samples.apply(lambda x: x*1.0/np.sum(x), axis=1)

Answer:

To determine which kind of establishment each sample represents, we need to compare each sample's feature values with the overall statistical description.

- Customer 1: It has high spending in Fresh and Delicatessen, low spending in Milk, Grocery and Detergents_Paper, and medium spending in Frozen. Hence it is probably a food retailer.

- Customer 2: It has low spending in Fresh, Milk, Grocery and Frozen, and medium spending in Detergents_Paper and Delicatessen. Spending is small and fairly balanced across almost all categories, so it might be a small restaurant.

- Customer 3: It has high spending in Fresh, Grocery and Delicatessen, low spending in Milk and Frozen, and medium spending in Detergents_Paper. It might also be a food retailer.

Implementation: Feature Relevance

One interesting thought to consider is if one (or more) of the six product categories is actually relevant for understanding customer purchasing. That is to say, is it possible to determine whether customers purchasing some amount of one category of products will necessarily purchase some proportional amount of another category of products? We can make this determination quite easily by training a supervised regression learner on a subset of the data with one feature removed, and then score how well that model can predict the removed feature.
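Repeating this check for every category, rather than for one chosen feature, shows at a glance which features are most predictable from the rest. The following is a minimal sketch, assuming scikit-learn 0.20 or later (where train_test_split lives in sklearn.model_selection); the helper relevance_scores and the toy columns 'a', 'b' and 'c' are hypothetical stand-ins, not part of the project code.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor


def relevance_scores(df, test_size=0.25, random_state=0):
    """Test-set R^2 obtained when each column is predicted from all the others."""
    scores = {}
    for feature in df.columns:
        X = df.drop(feature, axis=1)  # remaining features are the inputs
        y = df[feature]               # the removed feature is the target
        X_train, X_test, y_train, y_test = train_test_split(
            X, y, test_size=test_size, random_state=random_state)
        model = DecisionTreeRegressor(random_state=random_state).fit(X_train, y_train)
        scores[feature] = model.score(X_test, y_test)
    return pd.Series(scores).sort_values(ascending=False)


# Hypothetical toy data: 'b' is almost a multiple of 'a', 'c' is independent noise
rng = np.random.RandomState(0)
a = rng.uniform(0, 100, 400)
toy = pd.DataFrame({'a': a,
                    'b': 3 * a + rng.normal(0, 1, 400),
                    'c': rng.uniform(0, 100, 400)})
toy_scores = relevance_scores(toy)
print(toy_scores)
```

A feature with a high score, like 'b' here, is largely redundant given the others; a low or negative score, like 'c', means the feature carries information the others cannot reproduce. Calling relevance_scores(data) on the wholesale data would give the analogous ranking for all six categories.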

In the code block below, you will need to implement the following:

- Assign new_data a copy of the data by removing a feature of your choice using the DataFrame.drop function.

- Use sklearn.model_selection.train_test_split (sklearn.cross_validation.train_test_split in scikit-learn versions before 0.20) to split the dataset into training and testing sets.
  - Use the removed feature as your target label. Set a test_size of 0.25 and set a random_state.

- Import a decision tree regressor, set a random_state, and fit the learner to the training data.

- Report the prediction score of the testing set using the regressor's score function.

# TODO: Make a copy of the DataFrame, using the 'drop' function to drop the given feature
new_data = data.copy()
target = new_data['Grocery']  # the removed feature becomes the regression target
# drop() returns a new DataFrame unless inplace=True; new_data is already a copy,
# so the original data is left untouched either way
new_data.drop(['Grocery'], axis=1, inplace=True)

# TODO: Split the data into training and testing sets using the given feature as the target
# (train_test_split lived in sklearn.cross_validation before scikit-learn 0.20)
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor
from sklearn.metrics import r2_score
X_train, X_test, y_train, y_test = train_test_split(new_data, target, test_size=0.25, random_state=0)

# TODO: Create a decision tree regressor and fit it to the training set
# (the default criterion is squared error, formerly spelled 'mse')
regressor = DecisionTreeRegressor(random_state=0)
regressor.fit(X_train, y_train)

# TODO: Report the score of the prediction using the testing set
y_pred = regressor.predict(X_test)
score = regressor.score(X_test, y_test)  # equivalent to r2_score(y_test, y_pred)
print(score)

correlations = data.corr()
print("Correlation matrix between features:")
display(correlations)

# use seaborn
sns.heatmap(correlations, vmin=-1, vmax=1, annot=True)

## Below is code to plot the correlation matrix without seaborn.
## Note: seaborn versions before 0.8 changed matplotlib's default plot style on import.
# plot correlation matrix
# import matplotlib.pyplot as plt
# fig = plt.figure()
# ax = fig.add_subplot(111)  # stands for subplot(1,1,1)
# cax = ax.matshow(correlations, vmin=-1, vmax=1)
# fig.colorbar(cax)
# ticks = np.arange(0, 6, 1)
# ax.set_xticks(ticks)
# ax.set_yticks(ticks)
# ax.set_xticklabels(data.columns.values)
# ax.set_yticklabels(data.columns.values)
# plt.title('Plot of Correlation Matrix')
# plt.show()

Selecting Grocery as the response gives an R^2 of 0.60 on the test set, which means the model explains about 60% of the variation in Grocery. In other words, Grocery can largely be predicted from the other features.

We can also compute the correlation matrix directly. The matrix and its heatmap show that Milk, Grocery and Detergents_Paper are more strongly correlated with each other than with the other features; the strongest correlation is between Grocery and Detergents_Paper.

Question 2

Which feature did you attempt to predict? What was the reported prediction score? Is this feature necessary for identifying customers' spending habits?

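Hint: The coefficient of determination, R^2, is at most 1, with 1 being a perfect fit. A score of 0 means the model does no better than always predicting the mean of the target, and a negative R^2 means it does even worse, i.e. the model fails to fit the data.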
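The score returned by regressor.score is R^2 = 1 - SS_res / SS_tot, where SS_res is the sum of squared prediction errors and SS_tot is the sum of squared deviations of the target from its mean. A small sketch with made-up numbers, checked against sklearn.metrics.r2_score, shows how a prediction worse than the mean produces a negative value:

```python
import numpy as np
from sklearn.metrics import r2_score


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares around the mean
    return 1.0 - ss_res / ss_tot


y = [3.0, 5.0, 7.0, 9.0]                       # hypothetical targets, mean 6
perfect = r_squared(y, y)                      # 1.0
mean_only = r_squared(y, [6.0] * 4)            # 0.0: no better than predicting the mean
worse = r_squared(y, [9.0, 7.0, 5.0, 3.0])     # -3.0: SS_res = 80, SS_tot = 20
sklearn_worse = r2_score(y, [9.0, 7.0, 5.0, 3.0])
```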

Answer:

I predicted Grocery from the other five features in the Feature Relevance implementation above. The reported score was R^2 = 0.60, which means the model explains about 60% of the variation in Grocery; in other words, Grocery is correlated with some of the other features. The correlation matrix shows that the strongest of these correlations is between Grocery and Detergents_Paper.

Because Grocery can largely be predicted from the other features, it adds relatively little independent information, so it is probably not necessary for identifying customers' spending habits. We will use PCA later to reduce the dimensionality (correlated features receive similar loadings in the principal components).

Visualize Feature Distributions

To get a better understanding of the dataset, we can construct a scatter matrix of each of the six product features present in the data. If you found that the feature you attempted to predict above is relevant for identifying a specific customer, then the scatter matrix below may not show any correlation between that feature and the others. Conversely, if you believe that feature is not relevant for identifying a specific customer, the scatter matrix might show a correlation between that feature and another feature in the data. Run the code block below to produce a scatter matrix.

# Produce a scatter matrix for each pair of features in the data
# (pd.scatter_matrix moved to pd.plotting.scatter_matrix in pandas 0.20)
pd.plotting.scatter_matrix(data, alpha=0.3, figsize=(14, 8), diagonal='kde');

print('Skewness of each feature:')
print(data.skew(axis=0))

Question 3

Are there any pairs of features which exhibit some degree of correlation? Does this confirm or deny your suspicions about the relevance of the feature you attempted to predict? How is the data for those features distributed?

Hint: Is the data normally distributed? Where do most of the data points lie?

Answer:

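Several pairs of features exhibit correlation, such as Milk and Grocery, Milk and Detergents_Paper, and (most strongly) Grocery and Detergents_Paper. This confirms my suspicion that Grocery is not necessary for identifying customers' spending habits.

None of these three features (Milk, Grocery and Detergents_Paper) is normally distributed: most of the points lie on the left, close to the minimum value, with a long tail to the right. The skewness of every feature is greater than 1, indicating that they are all right-skewed.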

import matplotlib.pyplot as plt

def hist_plot(column):
    """Plot a 25-bin histogram of one feature of data."""
    fig = plt.figure()
    ax = fig.add_subplot(111)  # stands for subplot(1,1,1)
    ax.hist(data[column], bins=25)
    plt.title('Histogram plot of ' + column)
    plt.show()

columns = ['Milk', 'Grocery', 'Detergents_Paper']
for c in columns:
    hist_plot(c)

Data Preprocessing

In this section, you will preprocess the data to create a better representation of customers by performing a scaling on the data and detecting (and optionally removing) outliers. Preprocessing data is often times a critical step in assuring that results you obtain from your analysis are significant and meaningful.

Implementation: Feature Scaling

If data is not normally distributed, especially if the mean and median vary significantly (indicating a large skew), it is most often appropriate to apply a non-linear scaling, particularly for financial data. One way to achieve this scaling is by using a Box-Cox transformation, which calculates the best power transformation of the data that reduces skewness. A simpler approach which can work in most cases would be applying the natural logarithm.
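The Box-Cox family is y = (x^λ - 1) / λ for λ ≠ 0 and y = log(x) for λ = 0, so the natural logarithm is the special case λ = 0. A short sketch of the formula on hypothetical values, compared against scipy.stats.boxcox (which returns only the transformed array when lmbda is given, and the array plus the fitted λ when it is not):

```python
import numpy as np
from scipy import stats


def box_cox(x, lmbda):
    """Box-Cox transform of strictly positive x for a given lambda."""
    x = np.asarray(x, dtype=float)
    if lmbda == 0:
        return np.log(x)  # the lambda -> 0 limit is the natural log
    return (x ** lmbda - 1) / lmbda


x = np.array([1.0, 10.0, 100.0, 1000.0])
manual = box_cox(x, 0.5)
scipy_fixed = stats.boxcox(x, lmbda=0.5)  # lambda fixed: only the array is returned
scipy_log = stats.boxcox(x, lmbda=0)      # lambda = 0 reproduces np.log

# Without lmbda, scipy estimates the lambda that maximizes the log-likelihood
skewed = np.random.RandomState(0).lognormal(size=500)  # hypothetical skewed sample
transformed, best_lambda = stats.boxcox(skewed)
```

Log-normal data becomes exactly normal after a log, so the fitted λ for such a sample lands near 0; this is one way to see why the simpler log transform works reasonably well on spending data.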

In the code block below, you will need to implement the following:

- Assign a copy of the data to log_data after applying logarithmic scaling. Use the np.log function for this.

- Assign a copy of the sample data to log_samples after applying logarithmic scaling. Again, use np.log.

# TODO: Scale the data using the natural logarithm
log_data = data.apply(np.log)

# TODO: Scale the sample data using the natural logarithm
log_samples = samples.apply(np.log)  # this is the same as np.log(samples)

# Produce a scatter matrix for each pair of newly-transformed features
pd.plotting.scatter_matrix(log_data, alpha=0.3, figsize=(14, 8), diagonal='kde');

# do Box-Cox transformation (each column gets its own maximum-likelihood lambda)
from scipy import stats

def boxcox_(x):
    x_t, _ = stats.boxcox(x)  # returns (transformed values, fitted lambda)
    return pd.Series(x_t, index=x.index)

boxcox_data = data.apply(boxcox_, axis=0)
pd.plotting.scatter_matrix(boxcox_data, alpha=0.3, figsize=(14, 8), diagonal='kde');

I also applied a Box-Cox transformation. It appears to make the features closer to normal than the log transformation does; for example, Fresh and Delicatessen look more normally distributed.

Observation

After applying a natural logarithm scaling to the data, the distribution of each feature should appear much more normal. For any pairs of features you may have identified earlier as being correlated, observe here whether that correlation is still present (and whether it is now stronger or weaker than before).

Run the code below to see how the sample data has changed after having the natural logarithm applied to it.

# Display the log-transformed sample data

display(log_samples)

display(data.describe())

Implementation: Outlier Detection

Detecting outliers in the data is extremely important in the data preprocessing step of any analysis. The presence of outliers can often skew results which take into consideration these data points. There are many "rules of thumb" for what constitutes an outlier in a dataset. Here, we will use Tukey's Method for identifying outliers: An outlier step is calculated as 1.5 times the interquartile range (IQR). A data point with a feature that is beyond an outlier step outside of the IQR for that feature is considered abnormal.
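Tukey's rule reduces to two fences, Q1 - k·IQR and Q3 + k·IQR, with k = 1.5 for ordinary outliers and k = 3 for extreme ones. A minimal sketch for a single feature, using a hypothetical helper tukey_outliers and made-up values:

```python
import numpy as np
import pandas as pd


def tukey_outliers(series, k=1.5):
    """Index labels lying strictly outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, q3 = np.percentile(series, [25, 75])
    step = k * (q3 - q1)  # k = 1.5 gives Tukey's outlier step; k = 3 flags only extreme points
    mask = (series < q1 - step) | (series > q3 + step)
    return series[mask].index.tolist()


# Hypothetical feature values: 16 is a mild outlier, 100 an extreme one
s = pd.Series([1, 2, 3, 4, 5, 6, 7, 8, 16, 100])
print(tukey_outliers(s))       # [8, 9]
print(tukey_outliers(s, k=3))  # [9]
```

Here Q1 = 3.25 and Q3 = 7.75, so the 1.5·IQR fences are -3.5 and 14.5, while the 3·IQR fences widen to -10.25 and 21.25.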

In the code block below, you will need to implement the following:

- Assign the value of the 25th percentile for the given feature to Q1. Use np.percentile for this.

- Assign the value of the 75th percentile for the given feature to Q3. Again, use np.percentile.

- Assign the calculation of an outlier step for the given feature to step.

- Optionally remove data points from the dataset by adding indices to the outliers list.

NOTE: If you choose to remove any outliers, ensure that the sample data does not contain any of these points!

Once you have performed this implementation, the dataset will be stored in the variable good_data.

# For each feature find the data points with extreme high or low values
# both np.percentile and pandas.Series.quantile work
outliers_all = {}  # a dictionary to store outliers for each feature

for feature in log_data.keys():
    # TODO: Calculate Q1 (25th percentile of the data) for the given feature
    Q1 = np.percentile(log_data[feature], 25)
    # TODO: Calculate Q3 (75th percentile of the data) for the given feature
    Q3 = log_data[feature].quantile(0.75)
    # TODO: Use the interquartile range to calculate an outlier step (1.5 times the interquartile range)
    step = 1.5 * (Q3 - Q1)
    # A point is an outlier if it lies strictly outside [Q1 - step, Q3 + step]
    is_outlier = (log_data[feature] < Q1 - step) | (log_data[feature] > Q3 + step)
    outliers_all[feature] = log_data[is_outlier].index.tolist()
    # Display the outliers
    print("Data points considered outliers for the feature '{}':".format(feature))
    display(log_data[is_outlier])

# OPTIONAL: Select the indices for data points you wish to remove
# As the number of outliers is large compared to the total size, I select the indices of extreme outliers (beyond 3 * IQR)
extreme_outliers = []
for feature in log_data.keys():
    Q1 = np.percentile(log_data[feature], 25)
    Q3 = log_data[feature].quantile(0.75)
    step = 3 * (Q3 - Q1)
    extreme_outlier_tmp = log_data[(log_data[feature] < Q1 - step) | (log_data[feature] > Q3 + step)].index.tolist()
    # keep each index only once, even if it is extreme in several features
    for e in extreme_outlier_tmp:
        if e not in extreme_outliers:
            extreme_outliers.append(e)

Because the number of outliers is large compared to the total data size, I also identified the extreme outliers according to Tukey's method, i.e. points lying more than 3 times the IQR beyond the quartiles.

display(extreme_outliers)

# detect the outliers that appear in more than one feature
features = list(log_data.keys())
outlier_MoreThanOneFeature = []
for i in range(len(features) - 1):
    a = outliers_all[features[i]]
    for j in range(i + 1, len(features)):
        b = outliers_all[features[j]]
        # indices flagged as outliers for both feature i and feature j
        outlier_MoreThanOneFeature.extend([x for x in b if x in a])
outlier_MoreThanOneFeature = sorted(set(outlier_MoreThanOneFeature))
print('Outliers detected in more than one feature: {}'.format(outlier_MoreThanOneFeature))

# Remove the outliers, if any were specified
# Only the outliers detected in more than one feature are removed
good_data = log_data.drop(outlier_MoreThanOneFeature).reset_index(drop=True)

Question 4

Are there any data points considered outliers for more than one feature based on the definition above? Should these data points be removed from the dataset? If any data points were added to the outliers list to be removed, explain why.

Answer:

There are 5 points considered outliers for more than one feature. For example, the record with index 65 is an outlier in both Fresh and Frozen.

As we showed before, each feature has its own set of outliers. If we removed all of them, we would lose a lot of information in the data. Although those points are outliers by the definition above, we should be very careful about removing them: only when we have domain knowledge and are confident that those points are unreliable should we remove them.

In this dataset, as the number of outliers is large compared to the total data size, I only removed the five outliers that were detected in more than one feature.

Feature Transformation

In this section you will use principal component analysis (PCA) to draw conclusions about the underlying structure of the wholesale customer data. Since using PCA on a dataset calculates the dimensions which best maximize variance, we will find which compound combinations of features best describe customers.

Implementation: PCA

Now that the data has been scaled to a more normal distribution and has had any necessary outliers removed, we can now apply PCA to the good_data to discover which dimensions about the data best maximize the variance of features involved. In addition to finding these dimensions, PCA will also report the explained variance ratio of each dimension — how much variance within the data is explained by that dimension alone. Note that a component (dimension) from PCA can be considered a new "feature" of the space, however it is a composition of the original features present in the data.
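The explained variance ratio of a dimension is its variance (an eigenvalue of the feature covariance matrix) divided by the total variance. A small check on hypothetical data, assuming scikit-learn's PCA, which divides by n - 1 just like np.cov:

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.RandomState(0)
# Hypothetical correlated data: 200 samples, 4 features
X_toy = rng.normal(size=(200, 4)) @ rng.normal(size=(4, 4))

toy_pca = PCA(n_components=4).fit(X_toy)

# Eigenvalues of the sample covariance matrix, sorted from largest to smallest
eigvals = np.linalg.eigvalsh(np.cov(X_toy, rowvar=False))[::-1]
ratio_by_hand = eigvals / eigvals.sum()
```

Because the ratios of all components sum to 1, the cumulative sum of explained_variance_ratio_ tells how much of the total variance the first few dimensions retain.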

In the code block below, you will need to implement the following:

- Import sklearn.decomposition.PCA and assign the results of fitting PCA in six dimensions with good_data to pca.

- Apply a PCA transformation of the sample log-data log_samples using pca.transform, and assign the results to pca_samples.

Question 5

How much variance in the data is explained in total by the first and second principal component? What about the first four principal components? Using the visualization provided above, discuss what the first four dimensions best represent in terms of customer spending.

Hint: A positive increase in a specific dimension corresponds with an increase of the positive-weighted features and a decrease of the negative-weighted features. The rate of increase or decrease is based on the individual feature weights.

# TODO: Apply PCA by fitting the good data with the same number of dimensions as features

from sklearn.decomposition import PCA

pca = PCA(n_components = 6)

pca.fit(good_data)

# TODO: Transform the sample log-data using the PCA fit above

pca_samples = pca.transform(log_samples)

# Generate PCA results plot

pca_results = vs.pca_results(good_data, pca)

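# cumulative variance explained by the first two and by the first four components
print('First two components: {:.4f}'.format(sum(pca.explained_variance_ratio_[0:2])))
print('First four components: {:.4f}'.format(sum(pca.explained_variance_ratio_[0:4])))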

Answer:

Together, the first and second principal components explain 70.68% of the total variance; the first four explain 93.11%.

For PC1, Milk, Grocery, Detergents_Paper and Delicatessen have loadings of the same sign, so spending on them moves together: a customer with high spending in one of these categories tends to spend heavily on the other three as well. This dimension best represents clients such as cafes.

For PC2, Fresh, Frozen and Delicatessen have loadings of the same sign. This dimension best represents clients such as restaurants.

For PC3, Delicatessen and Frozen have loadings of one sign, while Fresh and Detergents_Paper have loadings of the opposite sign. This dimension best separates fresh-food retailers from delicatessen retailers, since such retailers usually focus on either fresh or delicatessen food, and these two categories pull PC3 in opposite directions.

For PC4, Delicatessen and Frozen have loadings of opposite sign, so, as with PC3, we can use PC4 to separate delicatessen retailers from frozen-food retailers.

Note that a principal component by itself does not represent a specific type of customer. Each principal component is a projection of the original data, so we can think of the components as new features; a principal component score describes one aspect of a customer, not a customer type.

However, a large positive or negative value along a PCA dimension can help differentiate between types of customers. For example, customers with large scores on the first principal component spend heavily on Detergents_Paper, Milk and Grocery, while customers with large scores on the second principal component spend heavily on Fresh, Frozen and Delicatessen.

Observation

Run the code below to see how the log-transformed sample data has changed after having a PCA transformation applied to it in six dimensions. Observe the numerical value for the first four dimensions of the sample points. Consider if this is consistent with your initial interpretation of the sample points.

# Display sample log-data after having a PCA transformation applied

display(pd.DataFrame(np.round(pca_samples, 4), columns = pca_results.index.values))

Implementation: Dimensionality Reduction

When using principal component analysis, one of the main goals is to reduce the dimensionality of the data, in effect reducing the complexity of the problem. Dimensionality reduction comes at a cost: fewer dimensions used implies less of the total variance in the data is being explained. Because of this, the cumulative explained variance ratio is extremely important for knowing how many dimensions are necessary for the problem. Additionally, if a significant amount of variance is explained by only two or three dimensions, the reduced data can be visualized afterwards.
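One common way to turn the cumulative explained variance ratio into a number of dimensions is to keep the smallest number of leading components that reaches a chosen threshold. A minimal sketch, with a hypothetical helper n_components_for and made-up ratios:

```python
import numpy as np


def n_components_for(explained_variance_ratio, threshold=0.9):
    """Smallest number of leading components whose cumulative ratio reaches threshold."""
    cumulative = np.cumsum(explained_variance_ratio)
    # index of the first cumulative value >= threshold, converted to a count
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(cumulative))


# Hypothetical explained variance ratios for six components
ratios = [0.45, 0.25, 0.12, 0.10, 0.05, 0.03]
print(n_components_for(ratios, 0.5))   # 2
print(n_components_for(ratios, 0.9))   # 4
print(n_components_for(ratios, 0.95))  # 5
```

scikit-learn can make a similar choice itself: passing a float between 0 and 1, e.g. PCA(n_components=0.9, svd_solver='full'), keeps enough components to explain more than that fraction of the variance. The threshold itself remains a judgment call, traded off against how easily the reduced data can be visualized.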

In the code block below, you will need to implement the following:

- Assign the results of fitting PCA in two dimensions with good_data to pca.

- Apply a PCA transformation of good_data using pca.transform, and assign the results to reduced_data.

- Apply a PCA transformation of the sample log-data log_samples using pca.transform, and assign the results to pca_samples.

# TODO: Apply PCA by fitting the good data with only two dimensions

pca = PCA(n_components=2)

pca.fit(good_data)

# TODO: Transform the good data using the PCA fit above

reduced_data = pca.transform(good_data)

# TODO: Transform the sample log-data using the PCA fit above

pca_samples = pca.transform(log_samples)

# Create a DataFrame for the reduced data

reduced_data = pd.DataFrame(reduced_data, columns = ['Dimension 1', 'Dimension 2'])

Observation

Run the code below to see how the log-transformed sample data has changed after having a PCA transformation applied to it using only two dimensions. Observe how the values for the first two dimensions remain unchanged when compared to a PCA transformation in six dimensions.

# Display sample log-data after applying PCA transformation in two dimensions

display(pd.DataFrame(np.round(pca_samples, 4), columns = ['Dimension 1', 'Dimension 2']))
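The first two dimensions do not change because PCA components are nested: fitting fewer components keeps the leading ones of the full fit. A quick check on hypothetical log-normal data, assuming scikit-learn's PCA with svd_solver='full' for both fits so that the same decomposition is computed each time:

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.RandomState(1)
toy_log = np.log(rng.lognormal(size=(300, 6)))  # hypothetical log-scaled data, 6 features

pca6 = PCA(n_components=6, svd_solver='full').fit(toy_log)
pca2 = PCA(n_components=2, svd_solver='full').fit(toy_log)

first_samples = toy_log[:3]  # stand-in for log_samples
same_first_two = np.allclose(pca6.transform(first_samples)[:, :2],
                             pca2.transform(first_samples))
print(same_first_two)
```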

Visualizing a Biplot

A biplot is a scatterplot where each data point is represented by its scores along the principal components. The axes are the principal components (in this case Dimension 1 and Dimension 2). In addition, the biplot shows the projection of the original features along the components. A biplot can help us interpret the reduced dimensions of the data, and discover relationships between the principal components and original features.

Run the code cell below to produce a biplot of the reduced-dimension data.

# Create a biplot

vs.biplot(good_data, reduced_data, pca)

Observation

Once we have the original feature projections (in red), it is easier to interpret the relative position of each data point in the scatterplot. For instance, a point in the lower right corner of the figure will likely correspond to a customer that spends a lot on 'Milk', 'Grocery' and 'Detergents_Paper', but not so much on the other product categories.

From the biplot, which of the original features are most strongly correlated with the first component? What about those that are associated with the second component? Do these observations agree with the pca_results plot you obtained earlier?

Answer:

First principal component: $Z_1=\phi_{11}X_1+\phi_{21}X_2+\dots+\phi_{p1}X_p$, where $Z_1$ is the principal component score, $X_1,\dots,X_p$ are the (mean-centered) features, and $\phi_{i1}$ is the loading of feature $X_i$ on the first principal component.

In the biplot, the x axis is the score on the first principal component (the value of the transformed new feature) and the y axis is the score on the second principal component. Each arrow shows one original feature's loadings on these two components (the visualization code multiplies arrow_size by the loading values). With all six components kept, the loading matrix is square and orthogonal, so these per-feature loading vectors are mutually orthogonal in the full six-dimensional component space. We only see their first two coordinates, however, so the arrows in the 2D plot are not perpendicular to each other.
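Both statements can be checked numerically: transform applies the weighted sum in the formula above to mean-centered data, and with as many components as features the loading matrix is orthogonal in both directions. A sketch on hypothetical data, assuming scikit-learn's default PCA (no whitening):

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.RandomState(0)
mixing = np.array([[2.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 0.5]])
X_toy = rng.normal(size=(100, 3)) @ mixing  # hypothetical correlated features
toy_pca = PCA(n_components=3).fit(X_toy)

# Z_k = phi_1k*(X_1 - mean_1) + ... + phi_pk*(X_p - mean_p), for every component k at once
scores_by_hand = (X_toy - toy_pca.mean_) @ toy_pca.components_.T
scores_sklearn = toy_pca.transform(X_toy)

V = toy_pca.components_  # row k holds the loadings of component k
rows_orthonormal = np.allclose(V @ V.T, np.eye(3))  # the components' loading vectors
cols_orthonormal = np.allclose(V.T @ V, np.eye(3))  # the per-feature vectors (biplot arrows)
```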

The original features most strongly correlated with the first component are Detergents_Paper, Milk and Grocery; for the second component, Fresh and Frozen are the strongest. Both observations are consistent with the pca_results plot.

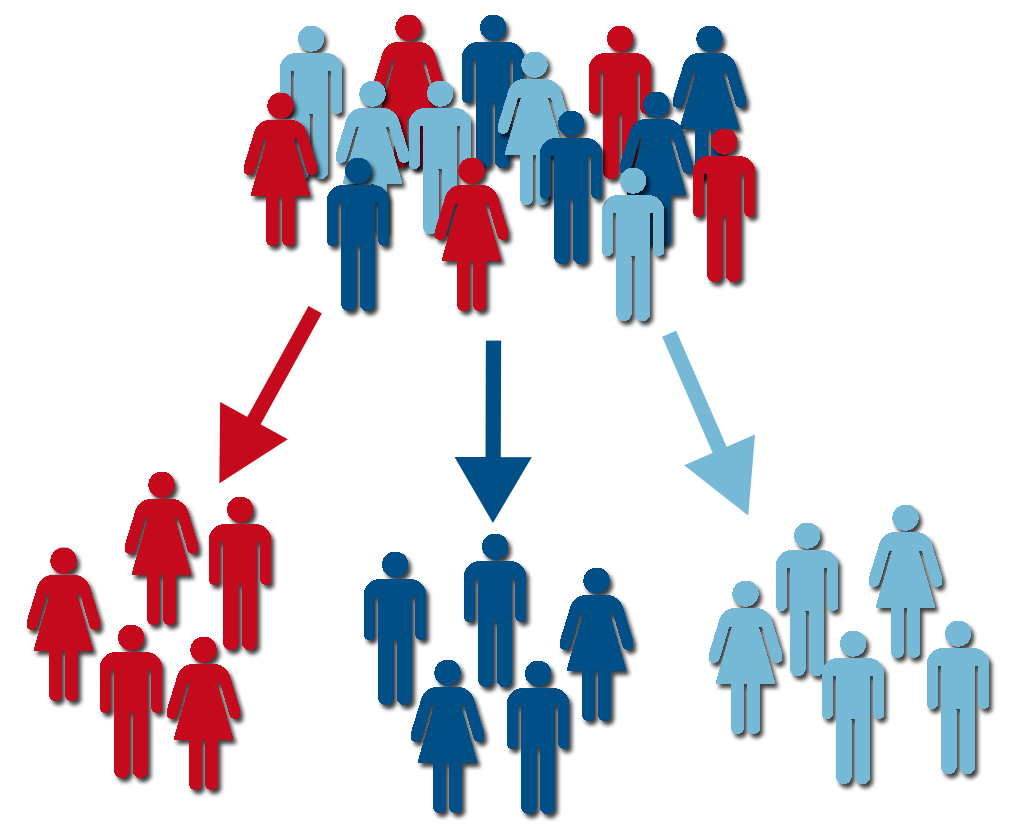

Clustering

In this section, you will choose to use either a K-Means clustering algorithm or a Gaussian Mixture Model clustering algorithm to identify the various customer segments hidden in the data. You will then recover specific data points from the clusters to understand their significance by transforming them back into their original dimension and scale.

Question 6

What are the advantages to using a K-Means clustering algorithm? What are the advantages to using a Gaussian Mixture Model clustering algorithm? Given your observations about the wholesale customer data so far, which of the two algorithms will you use and why?

Answer:

K-Means clustering algorithm: fast and easy to understand. It assigns each point to exactly one cluster by iteratively updating which cluster each point belongs to and where the center of each cluster lies. Because it uses Euclidean distance as its measure, K-Means works well at identifying clusters of spherical shape.
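The two alternating steps described above are Lloyd's algorithm. A from-scratch NumPy sketch, for illustration only: scikit-learn's KMeans additionally uses k-means++ initialization and several restarts (n_init), which this version omits, and the data here is hypothetical.

```python
import numpy as np


def kmeans_lloyd(X, k, n_iter=100, seed=0):
    """Lloyd's algorithm: alternate an assignment step and a center-update step."""
    rng = np.random.RandomState(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]  # k distinct points as initial centers
    for _ in range(n_iter):
        # Assignment step: each point joins its nearest center (squared Euclidean distance)
        dists = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        # Update step: each center moves to the mean of its points (kept in place if empty)
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                                for j in range(k)])
        if np.allclose(new_centers, centers):  # centers stopped moving: converged
            break
        centers = new_centers
    return labels, centers


# Hypothetical data: two well-separated spherical blobs of 50 points each
rng = np.random.RandomState(0)
toy = np.vstack([rng.normal(0, 0.5, (50, 2)), rng.normal(10, 0.5, (50, 2))])
toy_labels, toy_centers = kmeans_lloyd(toy, k=2)
```

Each step can only lower the within-cluster sum of squared distances, which is why the iteration converges, though only to a local optimum that depends on the initial centers.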

Gaussian Mixture Model clustering (GMM): each point can belong to multiple clusters with different probabilities, which is also called "soft clustering". It iteratively updates the probabilities of the latent cluster memberships and the model parameters that maximize the likelihood (the EM algorithm). Compared to K-Means, GMM can work better on datasets with non-spherical clusters and on clusters that overlap substantially in reality. The soft clustering is also more flexible. In fact, K-Means can be regarded as a simplified, hard-assignment version of EM for a GMM.
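scikit-learn's GaussianMixture exposes both views of the fit: predict_proba gives the soft assignment, one membership probability per component, and predict gives the hard assignment to the most probable component. A minimal sketch on hypothetical overlapping blobs:

```python
import numpy as np
from sklearn.mixture import GaussianMixture

# Hypothetical data: two overlapping 2-D blobs of 100 points each
rng = np.random.RandomState(0)
toy = np.vstack([rng.normal(0, 1, (100, 2)), rng.normal(3, 1, (100, 2))])

toy_gmm = GaussianMixture(n_components=2, random_state=0).fit(toy)
soft = toy_gmm.predict_proba(toy)  # soft assignment: each row is a probability distribution
hard = toy_gmm.predict(toy)        # hard assignment: the most probable component

# Points between the blobs get split membership rather than an all-or-nothing label
print('points with max membership below 0.9:', np.sum(soft.max(axis=1) < 0.9))
```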

Disadvantages: Both K-Means and the EM algorithm are guaranteed to converge, but only to a local optimum, so running them several times with different initializations can give a better result. GMM is also more computationally expensive than K-Means.

For this dataset, the visualization of the first two PCA components does not show the points separating into distinct clusters. Looking at the data alone, Gaussian Mixture Modeling could therefore be the better choice. However, since the data describes types of clients and each client has only one type, we choose K-Means clustering here. Also, as K-Means is faster than GMM, we run K-Means first as a preliminary analysis.

Implementation: Creating Clusters

Depending on the problem, the number of clusters that you expect to be in the data may already be known. When the number of clusters is not known a priori, there is no guarantee that a given number of clusters best segments the data, since it is unclear what structure exists in the data — if any. However, we can quantify the "goodness" of a clustering by calculating each data point's silhouette coefficient. The silhouette coefficient for a data point measures how similar it is to its assigned cluster from -1 (dissimilar) to 1 (similar). Calculating the mean silhouette coefficient provides for a simple scoring method of a given clustering.
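For a single point, with a the mean distance to the other members of its own cluster and b the mean distance to the members of the nearest other cluster, the silhouette coefficient is s = (b - a) / max(a, b). A minimal sketch of that formula on hypothetical points (the helper silhouette_of_point is illustrative), checked against scikit-learn's silhouette_samples and silhouette_score, which is the mean of the per-point values:

```python
import numpy as np
from sklearn.metrics import silhouette_samples, silhouette_score


def silhouette_of_point(X, labels, i):
    """s(i) = (b - a) / max(a, b); assumes point i's cluster has at least two members."""
    d = np.sqrt(((X - X[i]) ** 2).sum(axis=1))  # Euclidean distance from point i to every point
    same = labels == labels[i]
    same[i] = False                              # exclude the point itself
    a = d[same].mean()                           # mean distance within its own cluster
    b = min(d[labels == c].mean()                # mean distance to the nearest other cluster
            for c in np.unique(labels) if c != labels[i])
    return (b - a) / max(a, b)


# Hypothetical toy data with two clusters
X_toy = np.array([[0, 0], [0, 1], [5, 5], [5, 6], [6, 5]], dtype=float)
labels_toy = np.array([0, 0, 1, 1, 1])
by_hand = np.array([silhouette_of_point(X_toy, labels_toy, i) for i in range(len(X_toy))])
```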

In the code block below, you will need to implement the following:

- Fit a clustering algorithm to the reduced_data and assign it to clusterer.

- Predict the cluster for each data point in reduced_data using clusterer.predict and assign them to preds.

- Find the cluster centers using the algorithm's respective attribute and assign them to centers.

- Predict the cluster for each sample data point in pca_samples and assign them to sample_preds.

- Import sklearn.metrics.silhouette_score and calculate the silhouette score of reduced_data against preds.
  - Assign the silhouette score to score and print the result.

from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score

# Try several numbers of clusters and record the mean silhouette coefficient of each
scores = {}
for n in range(10, 1, -1):
    km = KMeans(n_clusters=n, random_state=0).fit(reduced_data)
    scores[n] = silhouette_score(reduced_data, km.predict(reduced_data), metric='euclidean')
    print('Silhouette score for {} clusters: {}'.format(n, scores[n]))

# Choose the number of clusters (the key) whose silhouette score (the value) is largest
best_N = max(scores, key=scores.get)
print('Maximal Silhouette score is achieved when number of cluster N = {}'.format(best_N))

# TODO: Apply your clustering algorithm of choice to the reduced data
clusterer = KMeans(n_clusters=best_N, random_state=0).fit(reduced_data)

# TODO: Predict the cluster for each data point
preds = clusterer.predict(reduced_data)

# TODO: Find the cluster centers
centers = clusterer.cluster_centers_

# TODO: Predict the cluster for each transformed sample data point
sample_preds = clusterer.predict(pca_samples)

# TODO: Calculate the mean silhouette coefficient for the number of clusters chosen
score = silhouette_score(reduced_data, preds, metric='euclidean')
print('Silhouette score for N = {}: {}'.format(best_N, score))

Question 7

Report the silhouette score for several cluster numbers you tried. Of these, which number of clusters has the best silhouette score?

Answer:

I tried cluster numbers from 2 to 10; 2 clusters give the best (largest) silhouette score.

Cluster Visualization

Once you've chosen the optimal number of clusters for your clustering algorithm using the scoring metric above, you can now visualize the results by executing the code block below. Note that, for experimentation purposes, you are welcome to adjust the number of clusters for your clustering algorithm to see various visualizations. The final visualization provided should, however, correspond with the optimal number of clusters.

# Display the results of the clustering from implementation

print('K-Means Clustering:')

vs.cluster_results(reduced_data, preds, centers, pca_samples)

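# Fit a Gaussian Mixture Model for comparison
# (sklearn.mixture.GMM was removed in scikit-learn 0.20; GaussianMixture replaces it)
from sklearn.mixture import GaussianMixture

g = GaussianMixture(n_components=2, random_state=0)
g.fit(reduced_data)
preds_GMM = g.predict(reduced_data)
centers_GMM = g.means_

print('Gaussian Mixture Model Clustering:')
vs.cluster_results(reduced_data, preds_GMM, centers_GMM, pca_samples)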

I also ran Gaussian Mixture Model clustering. Its result differs slightly from K-Means. Note that the EM algorithm used to fit a GMM produces a soft assignment: each point receives a probability of belonging to every component. sklearn's predict assigns each point to the component with the largest probability, so the result can be visualized in the same way as K-Means.
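To make the soft-versus-hard distinction concrete, the sketch below uses a hypothetical 1-D mixture with fixed weights, means and variances. It does not fit anything. It computes each component's posterior probability for a point, which is the E-step's soft assignment, and then takes the argmax as the hard label. On a fitted sklearn GaussianMixture, predict_proba returns the soft probabilities and predict returns their argmax.

```python
import math

def gaussian_pdf(x, mean, var):
    """Density of a 1-D normal distribution."""
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def responsibilities(x, weights, means, variances):
    """Posterior probability of each component given x (soft assignment)."""
    joint = [w * gaussian_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
    total = sum(joint)
    return [j / total for j in joint]

def hard_assign(x, weights, means, variances):
    """Hard label: the component with the largest posterior probability."""
    r = responsibilities(x, weights, means, variances)
    return max(range(len(r)), key=r.__getitem__)

# Hypothetical two-component mixture (not fitted to the customer data)
weights, means, variances = [0.5, 0.5], [0.0, 4.0], [1.0, 1.0]
r_mid = responsibilities(2.0, weights, means, variances)  # halfway between the means
```

A point halfway between the two means gets probability 0.5 for each component. Its hard label is therefore essentially arbitrary, which is why GMM and K-Means can disagree near the cluster boundary.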

Implementation: Data Recovery¶

Each cluster present in the visualization above has a central point. These centers (or means) are not specifically data points from the data, but rather the averages of all the data points predicted in the respective clusters. For the problem of creating customer segments, a cluster's center point corresponds to the average customer of that segment. Since the data is currently reduced in dimension and scaled by a logarithm, we can recover the representative customer spending from these data points by applying the inverse transformations.

In the code block below, you will need to implement the following:

- Apply the inverse transform to centers using pca.inverse_transform and assign the new centers to log_centers.
- Apply the inverse function of np.log to log_centers using np.exp and assign the true centers to true_centers.

# TODO: Inverse transform the centers

log_centers = pca.inverse_transform(centers)

# TODO: Exponentiate the centers

true_centers = np.exp(log_centers)

# Display the true centers

segments = ['Segment {}'.format(i) for i in range(0,len(centers))]

true_centers = pd.DataFrame(np.round(true_centers), columns = data.keys())

true_centers.index = segments

display(true_centers)
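The two inverse steps above can be written out explicitly. For PCA without whitening, sklearn's inverse_transform computes Z @ components_ + mean_. The numpy sketch below fits PCA via SVD on hypothetical log-normal "spending" data rather than the real dataset. It maps an averaged point in the reduced space back to log space and exponentiates it. The helper names fit_pca, pca_transform and pca_inverse_transform are illustrative, not part of any library.

```python
import numpy as np

def fit_pca(X, n_components):
    """PCA via SVD of the mean-centred data; returns (mean, components)."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components]

def pca_transform(X, mean, components):
    """Project data onto the principal components."""
    return (X - mean) @ components.T

def pca_inverse_transform(Z, mean, components):
    """Map reduced coordinates back to the original (log) feature space."""
    return Z @ components + mean

rng = np.random.default_rng(0)
spending = rng.lognormal(mean=8.0, sigma=1.0, size=(50, 6))  # hypothetical spending data
log_data = np.log(spending)

mean, components = fit_pca(log_data, n_components=2)
reduced = pca_transform(log_data, mean, components)

center = reduced[:10].mean(axis=0)                       # stand-in for one cluster center
log_center = pca_inverse_transform(center, mean, components)
true_center = np.exp(log_center)                         # back to monetary units
```

Because the center is an average of log values, exponentiating it yields something closer to a geometric mean than an arithmetic mean. When all components are kept it is exactly the geometric mean of the cluster's members. This is one reason recovered centers tend to sit below the dataset's arithmetic means for skewed spending data.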

Question 8¶

Consider the total purchase cost of each product category for the representative data points above, and reference the statistical description of the dataset at the beginning of this project. What set of establishments could each of the customer segments represent?

Hint: A customer who is assigned to 'Cluster X' should best identify with the establishments represented by the feature set of 'Segment X'.

display(np.exp(good_data).describe())

print('True Centers offset from mean of dataset:')

display(true_centers - np.round(np.exp(good_data).mean()))

print('True Centers offset from median of dataset:')

display(true_centers - np.round(np.exp(good_data).median()))

Answer:

We computed the centroid of each cluster and compared its spending in each category with the statistical description of the original features.

Segment 0: low Fresh spending, high Milk spending, high Grocery spending, low Frozen spending, high Detergents_Paper spending and medium Delicatessen spending.

Segment 1: medium Fresh spending, low Milk spending, low Grocery spending, medium Frozen spending, low Detergents_Paper spending and low Delicatessen spending.

Segment 1 looks like smaller business clients such as cafes and small restaurants, while Segment 0 looks like larger business clients such as grocery stores, retail stores and large restaurants.
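The answer above does not define "low", "medium" and "high". One reproducible convention, which is an assumption rather than the rule used above, is to compare each center value against the quartiles of that feature in the cleaned dataset. Values below the 25th percentile count as low, values above the 75th as high, and everything in between as medium.

```python
import numpy as np

def spending_level(value, column):
    """Label value 'low', 'medium' or 'high' relative to the column's quartiles (assumed convention)."""
    q1, q3 = np.percentile(column, [25, 75])
    if value < q1:
        return 'low'
    if value > q3:
        return 'high'
    return 'medium'

column = np.arange(1, 101)  # hypothetical feature values 1..100
levels = [spending_level(v, column) for v in (10, 50, 90)]
```

Applied to the notebook, this would mean calling spending_level on each entry of true_centers with the matching column of np.exp(good_data). Different cut-offs would give different labels, so this sketch only makes the qualitative wording checkable.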

Question 9¶

For each sample point, which customer segment from Question 8 best represents it? Are the predictions for each sample point consistent with this?

Run the code block below to find which cluster each sample point is predicted to be.

# Display the predictions
for i, pred in enumerate(sample_preds):
    print("Sample point {} predicted to be in Cluster {}".format(i, pred))

print("Show samples:")
display(samples)

print("Show segment centroids:")
display(true_centers)

Answer:

All three samples are assigned to Segment 1. From the earlier PCA, the four most important features are Detergents_Paper, Grocery, Milk and Delicatessen. Comparing the three samples with the segment centroids, their values on these four features are closer to the centroid of Segment 1.

The predictions are consistent with the clustering segment.
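K-Means' predict assigns each point to the closest cluster center, so the consistency check above amounts to a nearest-centroid comparison. The sketch below shows that rule. It also returns the distance to every center, which shows how clear-cut an assignment is. The coordinates are hypothetical points in a 2-D reduced space, not the notebook's pca_samples.

```python
import math

def nearest_centroid(point, centers):
    """Return (index of closest center, list of Euclidean distances to every center)."""
    distances = [math.dist(point, c) for c in centers]
    best = min(range(len(centers)), key=distances.__getitem__)
    return best, distances

centers = [(0.0, 0.0), (5.0, 5.0)]           # hypothetical 2-D cluster centers
label_a, dists_a = nearest_centroid((1.0, 1.0), centers)
label_b, dists_b = nearest_centroid((4.0, 4.5), centers)
```

If a sample's distances to the two centers are nearly equal, its cluster label is fragile even when it matches the segment description.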

Note: here we applied PCA first and then ran K-Means and GMM clustering. Applying PCA before clustering can help because discarding the low-variance components can suppress noise. See the related discussions on Quora for more.

Conclusion¶

In this final section, you will investigate ways that you can make use of the clustered data. First, you will consider how the different groups of customers, the customer segments, may be affected differently by a specific delivery scheme. Next, you will consider how giving a label to each customer (which segment that customer belongs to) can provide for additional features about the customer data. Finally, you will compare the customer segments to a hidden variable present in the data, to see whether the clustering identified certain relationships.

Question 10¶

Companies will often run A/B tests when making small changes to their products or services to determine whether making that change will affect its customers positively or negatively. The wholesale distributor is considering changing its delivery service from currently 5 days a week to 3 days a week. However, the distributor will only make this change in delivery service for customers that react positively. How can the wholesale distributor use the customer segments to determine which customers, if any, would react positively to the change in delivery service?

Hint: Can we assume the change affects all customers equally? How can we determine which group of customers it affects the most?

Answer:

Since the task is to find out which type of customer is more dissatisfied with the new delivery service, we can run an A/B test on the customers within each segment we identified.

For each segment, we randomly split the customers into two groups: one group keeps the original delivery service and the other receives the new one. We then test whether the satisfaction of the two groups differs significantly. If the group on the new service is significantly less satisfied within a segment, we conclude that this type of customer is likely to react negatively and keep the original delivery service for that segment. Otherwise, the new service can be considered for that segment.

This tells us whether one type of customer is affected more by the new delivery schedule than the other.
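One way to run the per-segment significance test is a two-proportion z-test, under the assumption that satisfaction is recorded as a yes/no survey answer. The counts below are hypothetical. Because the distributor only wants to switch customers who react positively, the sketch separates three outcomes: a significant drop, a significant improvement, and no significant difference.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference between two proportions (pooled variance)."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value

def evaluate_segments(results, alpha=0.05):
    """results maps segment -> ((satisfied, surveyed) on 5 days, (satisfied, surveyed) on 3 days)."""
    verdicts = {}
    for segment, ((s_a, n_a), (s_b, n_b)) in results.items():
        z, p = two_proportion_z_test(s_a, n_a, s_b, n_b)
        if p < alpha and z < 0:
            verdicts[segment] = 'keep 5-day service'       # significant drop in satisfaction
        elif p < alpha and z > 0:
            verdicts[segment] = 'switch to 3-day service'  # significant improvement
        else:
            verdicts[segment] = 'no significant difference'
    return verdicts

# Hypothetical survey results, 100 customers per arm
results = {
    'Segment 0': ((80, 100), (78, 100)),
    'Segment 1': ((82, 100), (60, 100)),
}
verdicts = evaluate_segments(results)
```

Randomizing within each segment, rather than across all customers, is what allows the segments to show different reactions. A single pooled test could average a strong negative effect in one segment against no effect in the other.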

Question 11¶

Additional structure is derived from originally unlabeled data when using clustering techniques. Since each customer has a customer segment it best identifies with (depending on the clustering algorithm applied), we can consider 'customer segment' as an engineered feature for the data. Assume the wholesale distributor recently acquired ten new customers and each provided estimates for anticipated annual spending of each product category. Knowing these estimates, the wholesale distributor wants to classify each new customer to a customer segment to determine the most appropriate delivery service.

How can the wholesale distributor label the new customers using only their estimated product spending and the customer segment data?

Hint: A supervised learner could be used to train on the original customers. What would be the target variable?

Answer:

We could use the "customer segment" as the target variable and train any classification algorithm, such as KNN or logistic regression, on the original customers to predict the segment of each new customer.
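A minimal k-nearest-neighbours sketch of this idea follows. The training features are assumed to be the existing customers' preprocessed spending, for example the log-transformed good_data. The target is the segment label produced by the clustering. New customers' estimates must go through exactly the same preprocessing before prediction. The toy arrays here are hypothetical.

```python
import numpy as np
from collections import Counter

def knn_predict(train_X, train_y, new_X, k=5):
    """Label each row of new_X by majority vote among its k nearest training rows."""
    train_X = np.asarray(train_X, dtype=float)
    preds = []
    for x in np.asarray(new_X, dtype=float):
        dists = np.linalg.norm(train_X - x, axis=1)  # Euclidean distance to every training row
        nearest = np.argsort(dists)[:k]
        votes = Counter(train_y[i] for i in nearest)
        preds.append(votes.most_common(1)[0][0])
    return preds

# Hypothetical preprocessed features and segment labels from clustering
train_X = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
train_y = [0, 0, 0, 1, 1, 1]
new_labels = knn_predict(train_X, train_y, [[0.5, 0.5], [10.5, 10.5]], k=3)
```

An odd k avoids tied votes when there are two segments. With the clustering model already fitted, clusterer.predict on the transformed estimates would also work. A separate supervised learner is mainly useful when the segments are treated as a fixed engineered label.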

Visualizing Underlying Distributions¶

At the beginning of this project, it was discussed that the 'Channel' and 'Region' features would be excluded from the dataset so that the customer product categories were emphasized in the analysis. By reintroducing the 'Channel' feature to the dataset, an interesting structure emerges when considering the same PCA dimensionality reduction applied earlier to the original dataset.

Run the code block below to see how each data point is labeled either 'HoReCa' (Hotel/Restaurant/Cafe) or 'Retail' in the reduced space. In addition, you will find the sample points are circled in the plot, which will identify their labeling.

# Display the clustering results based on 'Channel' data

#vs.channel_results(reduced_data, outliers, pca_samples)

vs.channel_results(reduced_data, outlier_MoreThanOneFeature, pca_samples)

Supplement:

We also visualized the high-dimensional data with t-SNE, colored by the Channel variable. The two categories separate well in the t-SNE embedding.

# Use t-SNE to visualize the data based on 'Channel'
import matplotlib.pyplot as plt
import seaborn as sns
from sklearn.manifold import TSNE

# Reload the full dataset to recover the 'Channel' column
full_data = pd.read_csv("customers.csv")
Channel = full_data['Channel']

# Drop the same outliers that were removed from good_data, then realign the index
Channel = Channel.drop(outlier_MoreThanOneFeature).reset_index(drop=True)
Channel = Channel.apply(lambda x: 'Retail' if x == 2 else 'HoReCa')

# Embed the log-transformed, outlier-free data in two dimensions
model = TSNE(n_components=2, random_state=0)
tsne_data = model.fit_transform(good_data)

data_plot = pd.concat([pd.DataFrame(tsne_data), Channel], axis=1)
data_plot.columns = ['x', 'y', 'Channel']

sns.lmplot(x='x', y='y', data=data_plot, hue='Channel', fit_reg=False)
plt.xlabel('Dimension 1', fontsize=12)
plt.ylabel('Dimension 2', fontsize=12)
plt.title('t-SNE', fontsize=16)

Question 12¶

How well does the clustering algorithm and number of clusters you've chosen compare to this underlying distribution of Hotel/Restaurant/Cafe customers to Retailer customers? Are there customer segments that would be classified as purely 'Retailers' or 'Hotels/Restaurants/Cafes' by this distribution? Would you consider these classifications as consistent with your previous definition of the customer segments?

Answer:

There are two customer labels, which matches the best number of clusters we chose. The underlying distribution also matches the result of the clustering algorithm very well.

Segment 0 corresponds to Retail and Segment 1 to HoReCa. Although a few points fall into the wrong cluster according to the true label, the overall classification is consistent with the previous definition of the customer segments.
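The visual match can be quantified by mapping each cluster to its majority Channel label and counting how many points agree. The sketch below uses hypothetical labels. In the notebook it could be applied to preds and the Channel series built for the t-SNE plot, assuming both have had the same outliers removed and share the same row order.

```python
from collections import Counter

def cluster_label_agreement(cluster_labels, true_labels):
    """Map each cluster to its majority true label; return (mapping, fraction of points that agree)."""
    pairs = Counter(zip(cluster_labels, true_labels))
    mapping = {}
    for cluster in set(cluster_labels):
        # (count, label) tuples: the largest count wins
        mapping[cluster] = max((count, label) for (c, label), count in pairs.items() if c == cluster)[1]
    agree = sum(1 for c, t in zip(cluster_labels, true_labels) if mapping[c] == t)
    return mapping, agree / len(true_labels)

clusters = [0, 0, 0, 1, 1, 1]                                      # hypothetical cluster labels
channel = ['Retail', 'Retail', 'HoReCa', 'HoReCa', 'HoReCa', 'HoReCa']  # hypothetical true labels
mapping, agreement = cluster_label_agreement(clusters, channel)
```

The agreement fraction turns "a few points fall into the wrong cluster" into a number. The mapping shows which Channel each segment corresponds to, without assuming that cluster indices line up with the Channel codes.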

Note: Once you have completed all of the code implementations and successfully answered each question above, you may finalize your work by exporting the iPython Notebook as an HTML document. You can do this by using the menu above and navigating to File -> Download as -> HTML (.html). Include the finished document along with this notebook as your submission.
